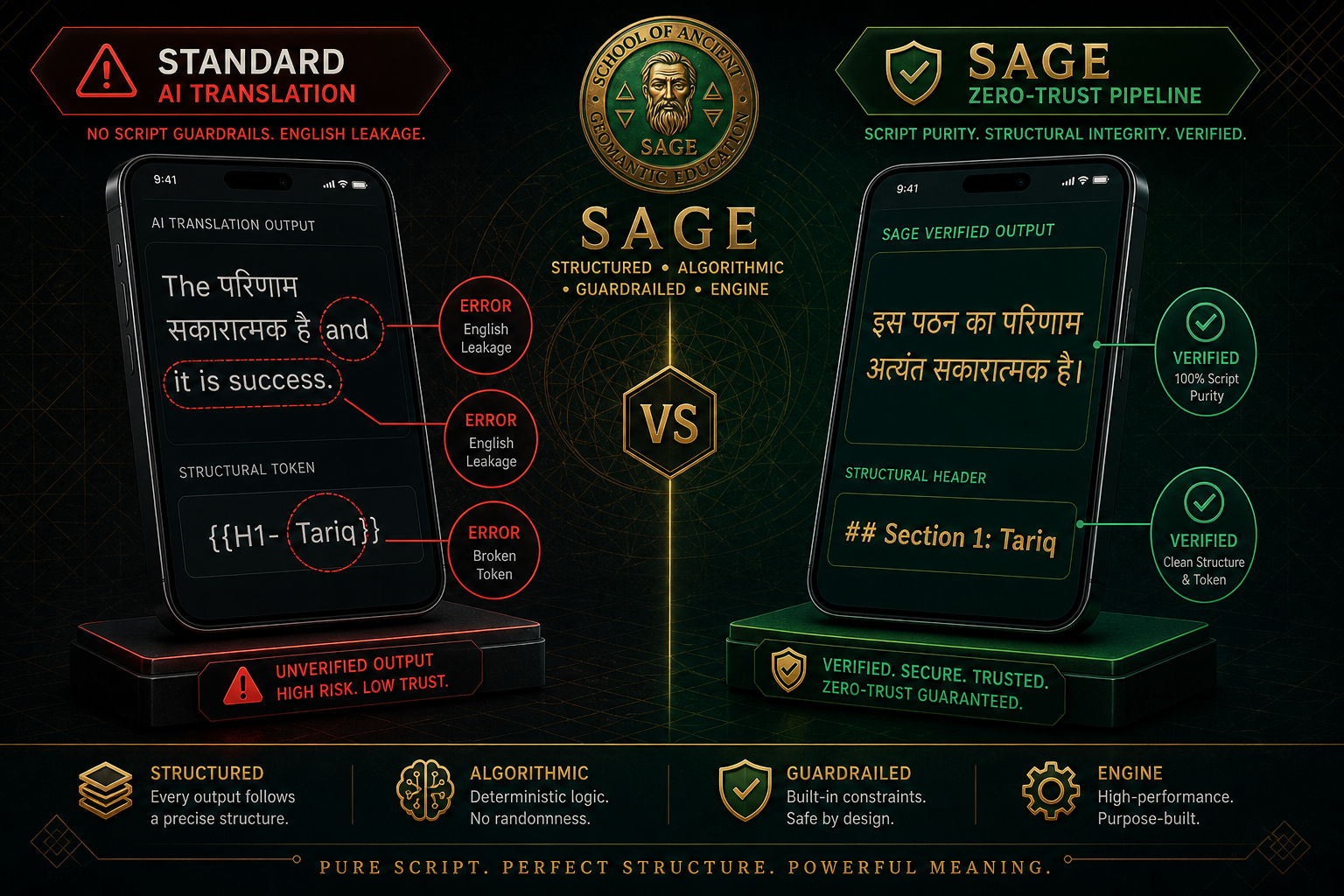

1. The Problem: The "Silent Leak"

When translating technical or narrative content using Large Language Models, the most common failure isn't a bad translation; it's English Leakage. You ask for a reading in Hindi, and the model gives you 95% Devanagari script, but silently leaks English function words like "the," "from," or "and" into the prose.

In a professional application like SAGE, this script mixing destroys the user experience and signals a lack of technical control. We call our solution Zero-Trust Translation: we assume the LLM will fail, and we engineer deterministic guards to catch it.

LLMs are trained heavily on English. When tasked with complex reasoning in a target language (like Arabic or Russian), the model's output often drifts back into English patterns, especially during long generations.

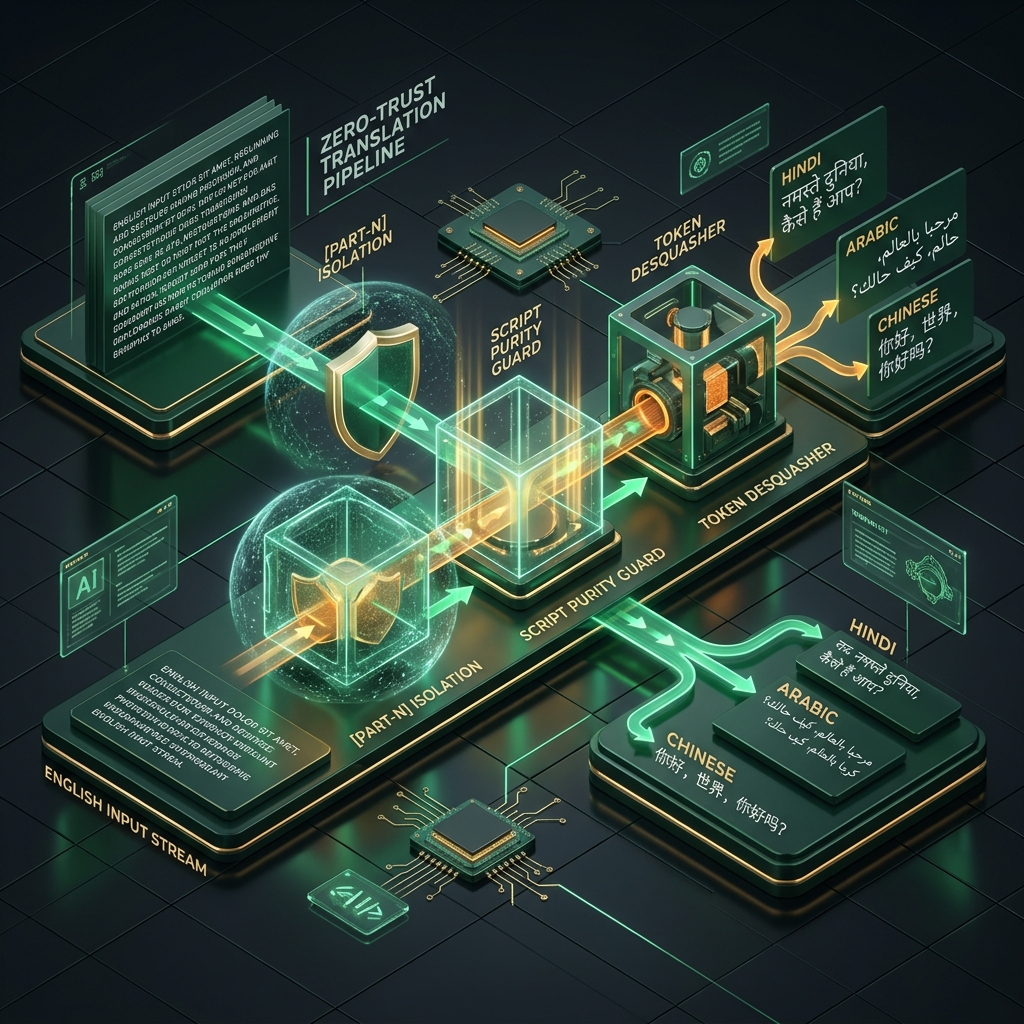

2. Stage 1: Hyper-Isolated Assembly

To prevent the translation process from corrupting the established structure of a reading, we use Hyper-Isolated Assembly. We never send the full, formatted document to the translator. Instead:

- The reading is partitioned into narrative segments.

- Each segment is wrapped in an opaque structural shield (e.g., [PART-1]).

- The translator sees only the prose. It cannot see, and therefore cannot mangle, the technical headers or UI-critical tokens.
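The steps above can be sketched as follows. This is a minimal illustration, not SAGE's actual implementation: the `(header, prose)` segment shape and the function names `build_payload` and `reassemble` are assumptions made for the example. Only the `[PART-n]` marker format comes from the description above.

```python
import re

# Marker format taken from the post; everything else here is hypothetical.
MARKER = re.compile(r"\[PART-(\d+)\]")


def build_payload(segments):
    """Wrap each prose segment in a [PART-n] shield; headers are left out entirely."""
    return "\n".join(
        f"[PART-{i}]\n{prose}" for i, (_, prose) in enumerate(segments, start=1)
    )


def reassemble(segments, translated_payload):
    """Re-attach the untouched headers to the translated prose, keyed by marker."""
    # re.split with one capture group yields [preamble, id, text, id, text, ...]
    pieces = MARKER.split(translated_payload)
    ids = [int(pieces[i]) for i in range(1, len(pieces) - 1, 2)]
    if ids != list(range(1, len(segments) + 1)):
        raise ValueError("translator dropped, duplicated, or reordered a part marker")
    texts = [pieces[i + 1].strip() for i in range(1, len(pieces) - 1, 2)]
    return "\n\n".join(
        f"{header}\n{text}" for (header, _), text in zip(segments, texts)
    )


# Example with a stand-in for the LLM call (simple string replacement).
segments = [("H1: Tariq", "The path opens."), ("H2: Summary", "Stay patient.")]
payload = build_payload(segments)
translated = payload.replace("The path opens.", "रास्ता खुलता है।").replace(
    "Stay patient.", "धैर्य रखें।"
)
document = reassemble(segments, translated)
```

Because the headers never enter the payload, the translator has no opportunity to alter them; the marker check catches the remaining failure mode, where the model drops or reorders a shield.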

3. Stage 2: Script Purity Guards

Every translation that emerges from the LLM must pass a **Deterministic Purity Firewall**. For non-Latin target languages (Hindi, Arabic, Chinese, Japanese), we run a script-density check:

if count(latin_articles) > threshold: trigger_retry()

If the number of English function words detected in a non-English output exceeds the threshold, the result is discarded. The system immediately triggers a second attempt with Zero Temperature and more aggressive system instructions.
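A minimal sketch of such a guard is shown below. The word list, the default threshold of 0, the initial temperature of 0.7, and the `translate(text, temperature, strict)` callable are all assumptions for illustration; the post specifies only that a failed check triggers a retry at zero temperature with stricter instructions.

```python
import re

# Hypothetical word list; the post names "the", "from", and "and" as typical leaks.
ENGLISH_FUNCTION_WORDS = {"the", "an", "and", "from", "of", "to", "in"}
LATIN_WORD = re.compile(r"[A-Za-z]+")


def count_leaks(text: str) -> int:
    """Count Latin-script words that are common English function words."""
    return sum(
        1 for word in LATIN_WORD.findall(text)
        if word.lower() in ENGLISH_FUNCTION_WORDS
    )


def translate_with_guard(translate, text, threshold=0):
    """Run one normal attempt, then one strict zero-temperature retry if it leaks."""
    output = translate(text, temperature=0.7, strict=False)
    if count_leaks(output) <= threshold:
        return output
    # First attempt is discarded; retry deterministically with stricter instructions.
    output = translate(text, temperature=0.0, strict=True)
    if count_leaks(output) > threshold:
        raise ValueError("translation still leaks English after retry")
    return output


# Stand-in for the LLM: leaks on the first call, is clean on the strict retry.
calls = []


def fake_translate(text, temperature, strict):
    calls.append((temperature, strict))
    return "यह रास्ता है" if strict else "यह the रास्ता है"


result = translate_with_guard(fake_translate, "This is the path")
```

The check is deterministic and cheap, so it can run on every response. Raising after the retry, rather than returning the leaky text, keeps a failed translation from reaching the user silently.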

4. Stage 3: Token Desquashing

A secondary artifact of cross-lingual generation is "token squashing." This happens when the model fuses a technical token with an adjacent word (e.g., H1-Tariq instead of H1: Tariq). Our **Layer 13 Universal Normalizer** identifies these fusions using fuzzy pattern matching and repairs them before the data ever reaches the frontend.
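As a rough illustration of the repair step, the sketch below uses a single regular expression in place of the fuzzy matching described above. The `H<number>` token shape is inferred from the `H1: Tariq` example, and the function name `desquash` is hypothetical; the real normalizer likely covers more token types.

```python
import re

# Matches an H<number> token that is fused to the following word, either directly
# or through a stray separator (hyphen, en dash, underscore, colon).
# The lookahead requires a letter, so "H1 is fine" and "H1-2" are left alone.
SQUASHED_TOKEN = re.compile(r"\b(H\d+)(?:\s*[-\u2013_:]\s*)?(?=[^\W\d_])")


def desquash(text: str) -> str:
    """Rewrite fused tokens such as 'H1-Tariq' into the canonical 'H1: Tariq'."""
    return SQUASHED_TOKEN.sub(r"\1: ", text)


repaired = desquash("H1-Tariq")
```

The pattern is idempotent: text that is already in the canonical `H1: Tariq` form comes out unchanged, so the repair can safely run on every response.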

By treating the LLM as an unreliable narrator, we've achieved 100% script purity across all 10 supported languages, ensuring that SAGE feels native to every user, everywhere.

Conclusion

Reliability in AI is not about better prompting; it's about deterministic engineering. Zero-Trust Translation ensures that the bridge between ancient wisdom and modern clarity remains unbroken by technical artifacts.